I have admired Philip Tetlock since, almost 30 years ago, he reviewed a book I had just written, which contained one big and so far untested prediction. Among many warm but perfunctory reviews, his was by far the most detailed, insightful and helpful assessment it received, and he mildly added references to a few papers which, when I followed them up, showed me exactly how much I had missed. His kindness made his critical points far more effective. (On a subsequent lecture tour I met one of the international affairs experts he had mentioned, who offered to work with me, though in the end I went on to other things and consequently never revised the book.)

Tetlock, P.E. (1986). Review of J. Thompson, Psychological aspects of nuclear war. British Journal of Social Psychology, 25, 78-79.

Now the Press are picking up his work on super-forecasting, which has major implications for how we go about anticipating and planning for future events, supposedly one of the features of high intelligence. Bright people should be particularly good at forecasting, shouldn’t they?

Superforecasting: The Art and Science of Prediction. Philip Tetlock and Dan Gardner. Sep 29, 2015

What has Tetlock found? First, that most pundit forecasts are unfalsifiable: even time travel would not tell you whether these commentators’ predictions had come true. They are at the low level of Nostradamus and contemporary journalism. Second, if you run a proper forecasting contest (not “will there be a stock market correction sometime soon?” but “what will the Standard & Poor’s 500 index stand at on 31 December 2015?”), most commentators are “too busy” to participate. They do the broad-brush stuff, which gets well paid, not the nitty-gritty testable stuff that nerds do for fun.

In his 1953 essay on Tolstoy’s view of history, Isaiah Berlin drew a distinction which he intended to be no more than an intellectual game, though he later admitted that every classification throws light on something.

There is a line among the fragments of the Greek poet Archilochus which says: ‘The fox knows many things, but the hedgehog knows one big thing.’ Scholars have differed about the correct interpretation of these dark words, which may mean no more than that the fox, for all his cunning, is defeated by the hedgehog’s one defence. But, taken figuratively, the words can be made to yield a sense in which they mark one of the deepest differences which divide writers and thinkers, and, it may be, human beings in general. For there exists a great chasm between those, on one side, who relate everything to a single central vision, one system, less or more coherent or articulate, in terms of which they understand, think and feel – a single, universal, organising principle in terms of which alone all that they are and say has significance – and, on the other side, those who pursue many ends, often unrelated and even contradictory, connected, if at all, only in some de facto way, for some psychological or physiological cause, related to no moral or aesthetic principle. These last lead lives, perform acts and entertain ideas that are centrifugal rather than centripetal; their thought is scattered or diffused, moving on many levels, seizing upon the essence of a vast variety of experiences and objects for what they are in themselves, without, consciously or unconsciously, seeking to fit them into, or exclude them from, any one unchanging, all-embracing, sometimes self-contradictory and incomplete, at times fanatical, unitary inner vision. 
The first kind of intellectual and artistic personality belongs to the hedgehogs, the second to the foxes; and without insisting on a rigid classification, we may, without too much fear of contradiction, say that, in this sense, Dante belongs to the first category, Shakespeare to the second; Plato, Lucretius, Pascal, Hegel, Dostoevsky, Nietzsche, Ibsen, Proust are, in varying degrees, hedgehogs; Herodotus, Aristotle, Montaigne, Erasmus, Molière, Goethe, Pushkin, Balzac, Joyce are foxes.

(As you can see, Isaiah Berlin could write. He was also very kind, and a friend tells me stories about him while pointing at the two prints Berlin gave him.)

Tetlock has taken this distinction to heart as a classificatory system. Forecasters can have specialist, narrowly focused expertise (hedgehogs) or a broad overview built from plagiaristic combinations of other people’s deep knowledge plus their own feelings (foxes).

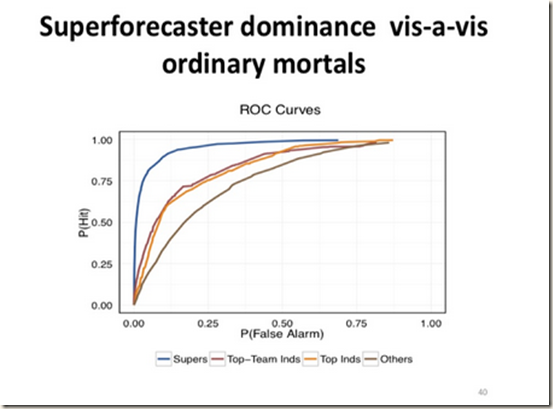

After conducting many prediction contests, Tetlock finds that some people are particularly accurate and deserve the accolade of superforecasters. Superforecasters could assign probabilities 400 days before an event about as well as regular people could about 80 days before it. Many of them were quite public-spirited software engineers, a group that is markedly over-represented among superforecasters.

A surprisingly large percentage of our top performers do not come from social science backgrounds. They come from physical science, biological science, software. Software is quite overrepresented among our top performers. If you looked at the personality profile of super-forecasters and super-crossword puzzle players and various other gaming people, you would find some similarities.

The individual difference variables are continuous and they apply throughout the forecasting population. The higher you score on Raven’s Matrices and on active open-mindedness, the more interested you are in becoming granular, and the more you view forecasting as a skill that can be cultivated and is worth devoting time to, the better you do: those things drive performance across the spectrum, whether or not you make the super-forecaster cut, which is rather arbitrary. There is a spirit of playfulness at work here. You don’t get that kind of effort from serious professionals for a $250 Amazon gift card. You get that kind of engagement because they’re intrinsically motivated; they’re curious about how far they can push this.

Comment: I think this makes sense. These super-forecasters are probably counters, not chatterers, that is, STEM not Verbal, with high fluid intelligence. Software has to work, and there are many, many ways in which it can go wrong. Murphy’s Law applies. Programs have to be tested to flush out errors, and you have to simulate the special situations users will create which can make an untested system crash. This background makes software engineers cautious, humble, and supremely focussed on “on budget, on time”.

Tetlock tried to boost forecasting accuracy through his Good Judgment Project, and found that his training techniques could improve accuracy by 50-70% relative to the group average. The project does this in the following ways:

The test of fluid intelligence was Raven’s Matrices. I promise you I began writing this post without knowing that. What I thought would be a little break from intelligence research turns out to prove the adage that intelligence runs through human life like carbon through biology.

You may have heard about the wisdom of crowds. I am with Dryden (1668) when he said: “If by the people you understand the multitude, the hoi polloi, ’tis no matter what they think; they are sometimes in the right, sometimes in the wrong: their judgment is a mere lottery.” As a general rule, crowds are in favour of war at the beginning of wars and against them if they drag on, which most of them do.

So the wisdom of crowds depends on the intelligence of the crowd or, more precisely, is boosted by paying extra attention to its intelligent members. Where opinions are polarised, one option is to use an algorithm to counteract the centralising, diluting effect of averaging those clashing perspectives. This helps get useful predictions out of crowds, but does not help super-forecasters (who probably know how to combine conflicting opinions anyway).
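
To make the aggregation idea concrete, here is a minimal Python sketch, offered as an illustration rather than the Good Judgment Project’s actual method. It pools forecasts with a weighted average (the weights are hypothetical, standing in for something like past accuracy or a Raven’s score) and then applies a simple “extremizing” transform, p^a / (p^a + (1 - p)^a), which pushes the pooled figure away from 50% to counteract the centralising effect of averaging. The function names and the exponent a = 2.0 are assumptions for illustration.

```python
def weighted_mean(probs: list[float], weights: list[float]) -> float:
    """Weighted average of probability forecasts (weights need not sum to 1)."""
    if len(probs) != len(weights) or not probs:
        raise ValueError("need equal-length, non-empty lists")
    total = sum(weights)
    return sum(p * w for p, w in zip(probs, weights)) / total


def extremize(p: float, a: float) -> float:
    """Push p away from 0.5 when a > 1; a = 1 leaves p unchanged.

    Equivalent to multiplying the log-odds of p by a.
    """
    num = p ** a
    return num / (num + (1.0 - p) ** a)


# Hypothetical crowd: three forecasters, the last given double weight.
forecasts = [0.6, 0.7, 0.8]
weights = [1.0, 1.0, 2.0]

pooled = weighted_mean(forecasts, weights)   # 0.725
sharpened = extremize(pooled, a=2.0)         # about 0.874
print(f"pooled={pooled:.3f} extremized={sharpened:.3f}")
```

With a = 1 the transform does nothing; larger values sharpen more, so in practice the exponent would have to be tuned against past results.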

An example of Kahneman-based predictive training is this rule of thumb: the probability of a subset should never be greater than the probability of the set from which the subset has been drawn. “Feminist bank teller” cannot be more likely than “bank teller”.
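
The rule can be checked mechanically. The Python below is a minimal sketch with hypothetical judgements and illustrative function names; it flags any pair in which a subset event has been given a higher probability than the set that contains it, as in Kahneman and Tversky’s well-known “Linda” problem.

```python
def violates_conjunction_rule(p_set: float, p_subset: float) -> bool:
    """True if a subset event is judged more likely than the set containing it.

    Any event B that is a subset of A satisfies P(B) <= P(A), so a forecast
    with p_subset > p_set is incoherent whatever the true probabilities are.
    """
    return p_subset > p_set


def check_forecasts(pairs: dict[str, tuple[float, float]]) -> list[str]:
    """Return the labels of (set, subset) probability pairs that break the rule."""
    return [label for label, (p_set, p_subset) in pairs.items()
            if violates_conjunction_rule(p_set, p_subset)]


# Hypothetical judgements, in the spirit of the "Linda" problem.
judgements = {
    "bank teller vs feminist bank teller": (0.10, 0.25),
    "recession vs recession with rising unemployment": (0.30, 0.20),
}
print(check_forecasts(judgements))
```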

What we’re trying to encourage in training is not only getting people to monitor their thought processes, but to listen to themselves think about how they think. That sounds dangerously like an infinite regress into nowhere, but the capacity to listen to yourself, talk to yourself, and decide whether you like what you’re hearing is very useful. It’s not something you can sustain neurologically for very long. It’s a fleeting achievement of consciousness, but it’s a valuable one and it’s relevant to super-forecasting.

The beauty of forecasting tournaments is that they’re pure accuracy games that impose an unusual monastic discipline on how people go about making probability estimates of the possible consequences of policy options. It’s a way of reducing escape clauses for the debaters, as well as reducing motivated reasoning room for the audience.

Regarding partisan pundits, Tetlock says:

High stakes partisans want to simplify an otherwise intolerably complicated world. They use attribute substitution a lot. They take hard questions and replace them with easy ones and they act as if the answers to the easy ones are answers to the hard ones. That is a very general tendency.

“Does my side know the answer?” is the really hard question. The easier one is “Whom do I trust more to know the answer, my side or their side?” I trust my side more to know the answer. Attribute substitution is a profound idea, and it allows us to think we know a lot of things that we don’t know. The net result of attribute substitution among both debaters and audiences is that it makes it very hard to learn lessons from history that we weren’t already ideologically predisposed to learn, because history hinges on counterfactuals.

Tetlock is now focussing on the societal impact of his findings, hoping to improve the predictions on which decisions are based. In his words: “The minimalist goal is to make it marginally more embarrassing to be incorrigibly close-minded, just marginally. The more ambitious goal is to make it substantially more embarrassing, and that requires talent and resources of the sort that academics like myself don’t possess. I don’t know how to create a TV show.”

Tetlock has some advice for improving forecasts. Like most advice it has its disappointments: a researcher close to the material understands in detail what is meant by “strike the right balance”, but to everyone else the phrase is of little help, simply an irritating truism.

Ten Commandments for Aspiring Super-Forecasters

1 Triage. Concentrate on questions in the Goldilocks Zone between clocklike predictability and cloudlike unpredictability.

2 Break seemingly intractable problems into tractable sub-problems. How many potential mates will a man find in London? Halve the total population to get the number of women, then keep only those in his age range, those who are single, those with a university degree, those he will find attractive, those who will find him attractive, and those who will be compatible, and you end up with 26 women out of a population of 6 million.

3 Strike the right balance between inside and outside views. How often do things of this sort happen in situations of this sort? When estimating the time taken to complete a project, take the employee estimate with a pinch of salt, and the client estimate as a correction factor.

4 Strike the right balance between over-reacting and under-reacting to evidence. The best forecasters tend to be incremental belief updaters, slightly altering probability estimates. They also know when to jump fast.

5 Look for clashing causal forces in each problem. Understand both thesis and antithesis, summarize both so you recognise how they will develop, then attempt synthesis.

6 Strive to distinguish as many degrees of doubt as the problem permits but no more.

7 Strike the right balance between under- and overconfidence, between prudence and decisiveness.

8 Look for the errors behind your mistakes but beware of rearview mirror hindsight biases.

9 Bring out the best in others and let others bring out the best in you.

10 Master the error-balancing bicycle.
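
Commandment 2 is, in effect, a Fermi estimate: a chain of multiplications in which each factor is a separate, more tractable guess. A minimal Python sketch follows. The filter fractions are hypothetical placeholders chosen for illustration, not the figures behind the 26 women quoted above, so the answer differs.

```python
from math import prod

# Hypothetical starting point and filter fractions, for illustration only.
population = 6_000_000
filters = {
    "women": 0.5,
    "in his age range": 0.2,
    "single": 0.5,
    "university degree": 0.3,
    "he finds attractive": 0.05,
    "find him attractive": 0.05,
    "compatible": 0.1,
}

estimate = population * prod(filters.values())

# Show how each tractable sub-problem narrows the pool.
remaining = float(population)
for name, fraction in filters.items():
    remaining *= fraction
    print(f"{name:>22}: {remaining:,.1f}")
print(f"Final estimate: about {estimate:.0f}")
```

Because each factor is explicit, any one of them can be challenged or revised without redoing the whole estimate.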
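
Commandments 3 and 4 can be read together: the outside view supplies a starting probability (a base rate for things of this sort), and each new piece of evidence nudges it, usually a little. One standard way to do the nudging is Bayes’ rule in odds form, sketched below in Python. The base rate and likelihood ratios are hypothetical, and this is a textbook illustration rather than a procedure from the book.

```python
def update(prob: float, likelihood_ratio: float) -> float:
    """One Bayesian update in odds form: posterior odds = prior odds * LR.

    LR = P(evidence | event) / P(evidence | no event). Values near 1 move the
    estimate only a little; very large or small values justify a bigger jump.
    """
    odds = prob / (1.0 - prob)
    odds *= likelihood_ratio
    return odds / (1.0 + odds)


# Outside view: a hypothetical base rate for events of this sort.
p = 0.20
# Inside view: hypothetical pieces of evidence, each summarised as a likelihood ratio.
for lr in [1.5, 1.2, 0.8]:
    p = update(p, lr)
    print(f"LR={lr}: p={p:.3f}")
```

Each step changes the estimate by only a few percentage points, which is the incremental updating described in commandment 4; a single piece of strong evidence (a likelihood ratio of, say, 10) would move it much further.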

To get further into this, either read the book or watch his five-part Edge masterclass, in which he answers questions and responds to suggestions.

http://edge.org/edge-master-class-2015-philip-tetlock-a-short-course-in-superforecasting

Comment

This is a first look at an engaging and important problem: how to perceive the world accurately enough to work out what will happen next. Intelligent beings need to be accurate much of the time. If Alex Wissner-Gross (2013) is right, intelligence is a thermodynamic process, and can spontaneously emerge from any organism’s attempt to maximise freedom of action in the future. The key ingredient seems to be the maximisation of future histories.

The key to decision making is to keep one’s options open, the most important option being staying alive.

Without googling, tell me who said this:

All the business of war, and indeed all the business of life, is to endeavour to find out what you don't know by what you do; that's what I called "guessing what was at the other side of the hill."

Wellington?

Gold medal for Thompson!

Gratefully received, but believe me, nothing except a battle lost can be half so melancholy as a battle won.

If you were a French general, what was just over the crest of the hill often turned out to be Wellington's army, lying in wait for you to march up that hill.

Yes, having surveyed the whole terrain a year before, and chosen where to place his troops.

Off topic, but the Add Health education genetic risk score (GRS_EDU) variable is now available to registered users. The application form doesn't yet seem to be available on the Add Health site (http://www.cpc.unc.edu/projects/addhealth/news/add-health-data-release), but Greg Christensen sent a copy, which was sent to him by Ben Domingue, so one is floating around. I didn't realize that Rietveld et al.'s (2013) alleles were used. We already know the between-group frequencies of these, so I'm not sure of their utility. It would be nice to see if they could explain some of the covariance between color and PPVT in the AA population, but I don't imagine anyone could be sold on doing that analysis.

John, that is an interesting development. I will see if others are willing to apply so as to use it.

I'd say superlative software engineers are BOTH counters and chatterers. Software is a team sport these days. Software recruiting blogs emphasize the importance of verbal intelligence: https://business.linkedin.com/talent-solutions/blog/2015/04/how-to-screen-for-the-perfect-software-engineer-a-k-a-sams.

And notice that the screen for verbal/interpersonal skills is listed first on this flow chart, and three quarters of candidates do not make it past that screen: http://www.toptal.com/freelance/in-search-of-the-elite-few-finding-and-hiring-the-best-developers-in-the-industry

Yes, bright persons first, then tune as best you can. Accenture (then Andersen Consulting) did this 30 years ago.

My impression (as a "superforecaster" who knows a lot of the other "supers", though not all of them) is that the profession most overrepresented among "superforecasters" is lawyers. Programmers, perhaps, but not as much as lawyers. Surprising to me.

DK, welcome and congratulations. As to lawyers, that is very surprising to me as well. My expectation would be that lawyers were bound by precedent and would have a formalistic approach, not suited to prediction. Have you got more data on this, or would Philip Tetlock (who will be interviewed by the FT in London on November 10) have all the data on professions?

I have limited data that definitely says "overrepresented", but I can't be categorical about the entire field. For now, Tetlock does not know for sure either. But since there is now a commercial offshoot that will involve "supers" prominently, there will, I suspect, be an effort to establish everyone's expertise and present an overall profile to clients.

Lawyers or programmers or scientists or engineers, one thing that's very definitely overrepresented is people with advanced degrees. So the narrative of some journalists about average schmucks off the street is not entirely accurate.

I found a lawyer/programmer who blogs the theory that lawyers and developers are similar:

http://www.javacodegeeks.com/2014/05/lawyers-and-developers-not-so-different.html

At the end of the day, both law and computing are about wrapping abstractions around very complex interactions such that the rules are comprehensible and the outcomes are predictable.

At the end of the day, both law and computing are about giving individuals the ability to reason about the behavior of systems (people, groups, computers) based on a wide variety of inputs that cannot all be conceived of when the systems/laws are initially developed. Both the law and computer systems have ways of dealing with new, unexpected inputs: judges/common law and system updates.

I was once in charge of finishing the long contract for selling the software division of the company where I worked to Oracle, the huge software company. We took about 4 programmers and 4 lawyers out to Silicon Valley and worked for 9 days with a similar team from Oracle.

After a couple of days, I suggested that writing a contract was much like computer programming, just using 15th Century English with a lot of French words. Most of what we were doing was working through each side's proposed if-then-else statements to see if the other side was comfortable with the consequences, and then dreaming up new if-then-else rules to follow when the outcome was Else.

The programmers from both firms agreed with me, while the lawyers thought I was being silly.

Fascinating. Although I can see the similarity now, the "surface" aspect of legal contracts, and taxation law, entirely defeats me. A flow chart, or simple to moderate difficulty program code is more congenial. I can arrange the sub-routines and then, with help, run it a few times. The quote I remember best about the Law is that it is "born out of despair at human nature". I like that. It gives psychology a chance.

Here's a superforecast. Almost every article you'll ever read about fusion power will imply that it's only (about) forty years from becoming practical. At least, they almost all have during my lifetime.

OK, let's get specific. Will fusion power be achieved by 29 October 2055? Assign a probability.

There was this joke in the USSR: a family is watching TV and a talking head proclaims that, in line with Party assurances, "communism is already on the horizon" (a Russian expression for something that is close). A child asks, "Papa, what is the horizon?" "Oh, dear, the horizon is an apparent line that can never be reached."

For as long as I can remember, that's been the story with fusion power and AI. The question, I assume, is about commercial fusion power that's at least ready for worldwide adoption for routine electricity generation. Well, without knowing much about where it stands today, I'd start with p~0.1. Containment is a formidable problem that may never be solved safely or cost-efficiently.

My forecast isn't about fusion power, it's about what newspaper articles (or the future equivalent) will say about fusion power. That's much safer ground.

P.S. What possible use could it be to assign a probability? How then could a future analyst know whether I had been right or wrong? What he'd need is a simple yay or nay.

dearieme, there are many very good reasons to assign probabilities. It's all outlined very well in the book. Or, if you are close to London, you can register for a day of talks by a bunch of "superforecasters": http://www.eventbrite.co.uk/e/superforecasting-and-geopolitical-intelligence-who-can-predict-the-future-and-how-tickets-17800174802

"there are many very good reasons to assign probabilities." That's as may be, but there's a conclusive disadvantage: you can't know retrospectively whether the forecaster was right or wrong.

Argentine version of the USSR joke: Argentina is the country of the future.

AI is already with us, but not in the way originally conceived. It will creep up on us further, not particularly noticed.

Fusion nuclear power: I feel it might be a bridge too far. Things are more manageable further down the thermodynamic chain.

you can't know retrospectively whether the forecaster was right or wrong

Not on any single question; of course you cannot. But that is what the world around us is like: all probabilities. I trust you find weather forecasts at least somewhat useful? And statistical physics still makes sense?

So Tetlock & Co. tracked about 150 questions every year for four years and recorded the accuracy of various participants and groups. Some tended to do better than others, and some of those did better every year, without reverting to the mean. If your policy depends on being right in aggregate, there is a clear advantage in going with the better forecaster.

There's a non sequitur in what you say. It would be perfectly possible to have lots of questions but demand firm yes/no answers.

If you demand firm yes/no answers, I would want to know at what probability level you decide to say yes or no.

The alternative to probabilities that is easier to aggregate is the bookie method. Assign right answers varying rewards based upon how the public is voting. More reward for correctly picking a 20-1 longshot than a 3-2 favorite. But, no reward for incorrectly picking a 20-1 longshot.
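
For readers unfamiliar with bookmakers’ odds, here is a minimal Python sketch of the scheme just described, assuming “20-1” and “3-2” are UK fractional odds (a to b against). It converts each to the break-even probability it implies and to the reward a correct pick would earn under the commenter’s rule, with nothing for a wrong pick. The function names are illustrative, and bookmakers’ margins are ignored.

```python
from fractions import Fraction


def parse_odds(odds: str) -> tuple[int, int]:
    """Split UK fractional odds such as "20-1" into (a, b), meaning a to b against."""
    a, b = (int(part) for part in odds.split("-"))
    return a, b


def implied_probability(odds: str) -> Fraction:
    """Break-even probability: a stake of b wins a, so p = b / (a + b)."""
    a, b = parse_odds(odds)
    return Fraction(b, a + b)


def reward(odds: str, correct: bool) -> float:
    """Reward per unit stake under the scheme described: a/b if right, nothing if wrong."""
    a, b = parse_odds(odds)
    return a / b if correct else 0.0


for odds in ["20-1", "3-2"]:
    p = implied_probability(odds)
    print(f"{odds}: implied p = {float(p):.3f}, reward if right = {reward(odds, True):.2f}")
```

The 20-1 longshot implies a probability of 1/21 (about 0.048) and the 3-2 favourite 2/5 (0.4), which is why a correct longshot pick earns the larger reward.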

Rewards have little to do with aggregation. Probabilities are very easy to aggregate and, as Tetlock described in his Edge series, some aggregation algorithms work better than others. The rewards have to do with incentives to be accurate. Tetlock's subjects had little monetary incentive, but their teams competed against each other and that apparently was good enough ("superforecasters" are a competitive bunch). The scoring in the competition used Brier scores, something routinely used in weather forecasting (see Wikipedia, etc.). They do have properties somewhat similar to what you describe.
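
A minimal Python sketch of a Brier score for yes/no questions. It uses Brier’s original two-category form, which sums squared errors over both outcomes and so runs from 0 (perfect) to 2 (maximally wrong); the single-outcome version found in many references is exactly half of it. The forecasts and outcomes are hypothetical.

```python
def brier_score(forecasts: list[float], outcomes: list[int]) -> float:
    """Mean Brier score for binary questions, on the two-category scale.

    For each question, sum the squared errors over both outcomes ("yes" and "no"):
    (f - o)**2 + ((1 - f) - (1 - o))**2 == 2 * (f - o)**2.
    0 is perfect, a constant 50% forecast scores 0.5, and 2 is maximally wrong.
    """
    if len(forecasts) != len(outcomes) or not forecasts:
        raise ValueError("need equal-length, non-empty lists")
    return sum(2 * (f - o) ** 2 for f, o in zip(forecasts, outcomes)) / len(forecasts)


outcomes = [1, 0, 0, 1]          # hypothetical resolved questions (1 = happened)
confident = [0.9, 0.1, 0.2, 0.8]
hedger = [0.5, 0.5, 0.5, 0.5]

print(brier_score(confident, outcomes))  # lower is better
print(brier_score(hedger, outcomes))
```

Scored over many questions, this rewards the forecaster who is both confident and right, and penalises confident misses heavily, which is how a track record can be judged even though no single probability can be called right or wrong.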

Fascinating stuff. This should be the goal of social science, not whatever they're doing now.

It would be perfectly possible to have lots of questions but demand firm yes/no answers.

Of course it is perfectly possible, but ridiculously impractical. At infinitely high N, the yes/no answers (assuming Yes means p > 0.5 and No means p < 0.5) will asymptotically converge on the true belief of the crowd that is forecasting. Or one can simply ask each member of the crowd to forecast his own true belief, in which case far fewer forecasters are needed to derive an aggregated crowd forecast.

If it is a forecast that one is interested in, under any condition of limited resources asking for firm yes/no answers is plainly foolish.
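
The information lost by demanding firm answers shows up even on a single question. In this minimal Python sketch (hypothetical numbers, illustrative function names), five forecasters all think an event is only slightly more likely than not: averaging their probabilities recovers that belief, while counting yes/no answers at a 0.5 threshold reports a unanimous yes.

```python
def mean_probability(probs: list[float]) -> float:
    """Aggregate by averaging the forecasters' stated probabilities."""
    return sum(probs) / len(probs)


def share_saying_yes(probs: list[float], threshold: float = 0.5) -> float:
    """Aggregate firm yes/no answers, where "yes" means p > threshold."""
    return sum(p > threshold for p in probs) / len(probs)


# Five hypothetical forecasters who all think the event is only slightly likely.
crowd = [0.55, 0.6, 0.6, 0.6, 0.65]
print(mean_probability(crowd))   # the crowd's actual belief, about 0.6
print(share_saying_yes(crowd))   # 1.0: every answer rounds up to "yes"
```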

The idea of "firm yes/no" reminded me of another old Soviet joke. Women's logic:

Q. What is the probability that there is a white elephant standing right now in front of your window?

A. 50%

Q. Really, how come?

A. Self-evident! The elephant is either there or it is not!

Off topic

Women tend to score better than men in reading ability, and that difference seems larger than the one in maths ability, where men do better than women.

Ok, but

what about among the Ashkenazim?

My hypothesis to explain the "Jewish advantage":

a higher percentage of verbally and mentalistically gifted men than in any other "race"!!

In non-Jewish Caucasian populations, women will tend to surpass men in reading and mentalistic ability.

But in Ashkenazi populations, these differences will be smaller AND more men will be highly gifted in verbal and mentalistic skills. And men tend to be better than women, or more men than women reach the very highest levels.

If you guys understand my macaronic English!!

The focus on Raven's and math ability neglects the importance of specific experience and subject matter expertise. There are lots of circumstances where the latter matter. I wouldn't want an economist to tell me how likely crops in my garden are to survive.

All I can report is that superforecasters had to make predictions about a large number of matters, well outside specific subject matter boundaries. That was the point of the tournament. The key drivers are the ones shown above.