It takes a certain self-confidence to publish a paper with a four-word title, particularly when those four words include “nature” and “nurture”. The paper has four authors, so presumably they were allowed to choose a word each. On the other hand, a paper entitled “Analysis of reading comprehension in monozygotic and dizygotic twins, distinguishing between the most competent 5% and the less competent 95%” would lack rhetorical flourish. It would also have sold the paper short, for the authors have done more than just report a result: they have explained many of the debates surrounding genetic research in a clear and helpful manner. They have demonstrated expertise as scholars, and patience as explainers.

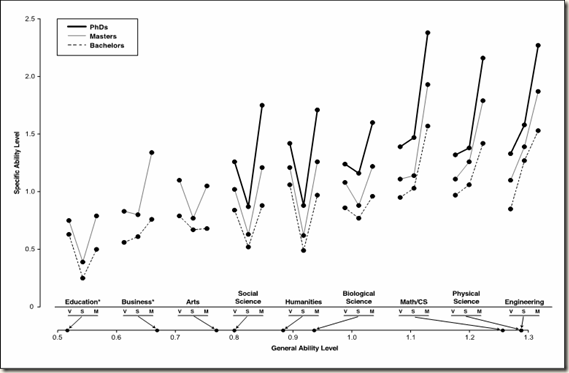

Robert Plomin, Nicholas Shakeshaft, Andrew McMillan and Maciej Trzaskowski all work at the Institute of Psychiatry, a Camberwell institution of uncertain architecture, where they have been tracking twins for many years. They still have over 10,000 twins taking their tests, including four different reading tests. For those of you in a hurry, the answers are as follows: More than half of the difference between expert and normal readers is genetic. Expert readers show the same genetic effects as normal readers. Less than a fifth of the expert-normal difference is due to shared environment.

So, you can clock this one up as yet another paper showing that genetics rule. And so they, very probably, do, given certain favourable circumstances.

The authors are primarily interested in the origins of expertise as it exists in the world (“what is”) rather than investigating the extent to which training can improve performance under experimental conditions (“what could be”). “We used the twin method to investigate the genetic and environmental origins of exceptional performance in reading, a skill that is a major focus of educational training in the early school years.” They selected “reading experts” as the top 5% from a sample of 10,000 12-year-old twins (1931 monozygotic pairs, 1714 same-sex dizygotic, and 1668 opposite-sex dizygotic) assessed on a battery of reading tests. Here are the three main findings in a little more detail. First, they found that genetic factors account for more than half of the difference in performance between expert and normal readers. Second, their results suggest that reading expertise is the quantitative extreme of the same genetic and environmental factors that affect reading performance for normal readers. Third, growing up in the same family and attending the same schools account for less than a fifth of the difference between expert and normal readers.

As regards the old-style nature and nurture debate about expertise, the authors have found two extreme environmentalists, Ericsson and Howe, but no extreme hereditarians. “In all areas of the behavioral sciences, genetic influence has been shown to account for substantial variance, but this same research provides strong evidence for the importance of environment as well. Heritability, which is an effect size index of the proportion of phenotypic variance that is accounted for by genetic variance, is typically between 30 and 60% across psychological traits, which means that 40–70% of the variance is not genetic in origin.”
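The definition in that quotation can be written compactly. As a sketch only, using the conventional symbols $V_G$ for genetic variance and $V_P$ for total phenotypic variance (the notation is my assumption, not something quoted from the paper):

$$
h^2 = \frac{V_G}{V_P}, \qquad 1 - h^2 = \frac{V_P - V_G}{V_P}
$$

So a heritability of 0.30 leaves 0.70 of the variance non-genetic, and a heritability of 0.60 leaves 0.40, which gives the 40–70% range in the quotation.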

“The critical point is this: There is no necessary connection between ‘what is’ and ‘what could be’. That is, even if the difference between experts' performance and the performance of the rest of the population were due solely to genetic differences (what is), a new environmental intervention such as a new training regime could still greatly improve performance (what could be). For example, although obesity is highly heritable, if people stop eating they will lose weight; moreover, a novel environmental intervention such as bariatric surgery can dramatically reduce extreme obesity.”

Of course, this is not an entirely convincing example, because bariatric surgery can fail if, as often happens, patients circumvent it by changing to smaller but far more frequent meals, but the point still has potential. Personally, I think they are being too kind to putative new training regimes, because so few of them ever amount to much when compared with ordinary training regimes. Reading schemes are a dime a dozen: they have to be used quickly before their special effects wear out.

They continue: “Showing that diets and other interventions can make a difference (what could be) tells us nothing about the genetic and environmental origins of obesity as it exists in the world (what is). In the same way, finding that training improves performance (what could be) tells us nothing about the genetic and environmental etiology of existing performance differences in the population (what is). Although there is no necessary relationship between ‘what is’ and ‘what could be’, some of the most far-reaching questions about the acquisition of expertise lie at the interface between ‘what is’ and ‘what could be’.”

Plomin has always argued that it would be wrong to talk about genetic influence in terms of genetic constraints: new circumstances or new methods of instruction may change the picture considerably or, at the very least, it is conceivable that they could.

“Heritability is a descriptive statistic that describes the average extent to which genetic differences (i.e., differences in DNA sequence) between individuals account for phenotypic differences on a particular measure in a particular sample with its particular mix of genetic and environmental influences at a particular developmental age and secular time. In other words, heritability describes ‘what is’ in a particular sample; it does not connote innateness or immutability. Nor does it indicate the mechanisms by which DNA differences affect individual differences in performance. By itself, DNA cannot do anything — it requires an environment inside and outside the body to have its effects. Access to experience and practice is one of the many pathways between genes and behavior.”

“The surprise from research using genetically sensitive designs in many domains is that shared environmental effects are so small”

Personally, I think this is a very important point, and damages the Mark I environmentalist position, which has always laid stress on the presumed shared advantage conferred by family and school.

“What is inherited is DNA sequence variation. The DNA sequence in the single cell with which your life began is the same DNA sequence in all of the trillions of cells in your body for the rest of your life. Nothing changes your DNA sequence variation — not environment, biology or behavior. What changes is the rate of transcription of your DNA sequence into RNA. For example, you are changing the transcription of your DNA that codes for neurotransmitters as you read this sentence. If your inherited DNA sequence coding for one of these neurotransmitters differs functionally from other individuals, this coding difference will appear every time that your DNA is transcribed into RNA — as you read, think and practice. Transcription of DNA into RNA is a response to the environment; what is inherited is DNA sequence variation. All of the other -omics in between genomics and behavior – epigenomics, transcriptomics, proteomics – are important for understanding pathways between genes and individual differences in outcomes, but they are not inherited from parent to offspring. For this reason, DNA sequence variation is in a causal class of its own in the sense that there is no direction of effects issue when it comes to correlations between genes and behavior. In other words, correlations between DNA sequence variation and behavior are ultimately causal from genes to behavior because our behavior and experiences do not change DNA sequence variation. Other correlations between behavior and biology, including all the -omics and the brain, raise questions about the direction of effects, that is, whether the correlation is caused by the effects of behavior on biology or vice versa.”

The authors also report on the work of Fox, Hershberger and Bouchard (1996), who used a twin design to look at training effects on a motor learning task. All the twins improved, rising from 15% on target to 60% on target, but some twins improved more than others. There was a genetic influence on the outcome of training, such that the estimated heritability seemed to have risen by the end of training.

If we can ever attain the ideal of “a level playing field” in life, then we will be able to see who can really run faster (and the proportion of variance accounted for by genetic influences will very probably increase, not decrease, because the good environment will apparently fall out of the equation).
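To see why a level playing field would push heritability up rather than down, here is a toy calculation. It assumes the simplest possible model, in which phenotypic variance is just genetic variance plus environmental variance, with no correlation or interaction between the two. The function name and all the numbers are invented for illustration and do not come from the paper.

```python
def heritability(genetic_variance: float, environmental_variance: float) -> float:
    """Proportion of phenotypic variance accounted for by genetic variance.

    Assumes phenotypic variance = genetic + environmental variance
    (no gene-environment correlation or interaction), a simplification.
    """
    total = genetic_variance + environmental_variance
    if total <= 0:
        raise ValueError("total variance must be positive")
    return genetic_variance / total


# Hypothetical numbers: genetic variance is held fixed while environments
# are made progressively more equal (environmental variance shrinks).
GENETIC_VARIANCE = 50.0
for env_var in (50.0, 25.0, 10.0, 0.0):
    h2 = heritability(GENETIC_VARIANCE, env_var)
    print(f"environmental variance {env_var:5.1f} -> heritability {h2:.2f}")
```

With these made-up figures the genetic differences themselves never change, yet heritability climbs from 0.50 to 1.00 as the environmental differences are ironed out. In this simplified model, equalising the environment makes the remaining differences look more genetic, not less.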

Life events. “Life events have been used as environmental measures in thousands of studies, but life events are not measures of an objective environment ‘out there’ that happens passively to people. The likelihood that we will experience problems with relationships, financial disruption, and other life events – and nearly all other environmental measures used in psychological research – depends in part on genetically influenced behavioral traits (McAdams, Gregory, & Eley, 2013). A review of 55 independent genetic studies using environmental measures found an average heritability of 27% across 35 different environmental measures (Kendler & Baker, 2007). There are few measures of psychologically relevant environments that do not show genetic influence when investigated in adequately powered genetically sensitive studies; significant genetic influence has been reported for some unlikely experiences such as childhood accidents (Phillips & Matheny, 1995), bullying victimization (Bowes et al., 2013), and children's television viewing (Plomin, Corley, DeFries, & Fulker, 1990).”

Genotype–environment correlation. “Consider the development of expertise in reading. Children who are expert readers are likely to have parents who read well and provide their children with both genes and an environment conducive to the development of reading (passive genotype–environment correlation). Children with a genetic propensity towards reading might also be picked out at school and given special opportunities (evocative type). Even if no one does anything about their reading, children with genetic proclivities towards reading can seek out their own enriched reading environments, for example, by selecting friends who like to read, or simply by reading more books (active type). We suggest that such genotype–environment correlational processes are important mechanisms by which children develop expertise in other domains as well, such as sports and music.

Specifically in relation to the development of expertise, genotype–environment correlation research leads to an active model of experience in which children select, modify, and create their own environments in part on the basis of their genetic propensities. Rather than thinking about the development of expertise as the passive acquisition of an imposed one-size-fits-all training regime, this active model of genetically guided experience leads to a more individualized approach. The essence of the active model of experience is choice — allowing children to sample an extensive menu of experiences so that they can discover their appetites as well as aptitudes. This active model of genotype–environment correlation might be more cost-effective in fostering expertise than the passive training model – and it will certainly be more fun for parents as well as children – because if all goes well, children will try to become the best they can be because they want to, not because they are made to do it.”

As Jensen said long ago, children will probably do better if offered an educational cafeteria, and not a set meal.

I have quoted this paper at length because I think it clarifies many issues. Short title, long on content. It is a free access paper so have a look at it yourself.

http://dx.doi.org/10.1016/j.intell.2013.06.008